The Changing Role of Women in American Society

The role of women in American society has evolved significantly over the decades, reflecting broader cultural shifts and advancements in gender equality. From the early colonial period to the present day, women’s roles have undergone profound transformations, impacting family dynamics, workforce participation, political representation, and societal expectations. This article explores the historical milestones and contemporary trends that have shaped the changing role of women in American society.

Historical Context.

In colonial America, women’s roles were primarily confined to domestic duties and child-rearing within patriarchal family structures. The ideals of “Republican Motherhood” during the late 18th century emphasized women’s role in educating future citizens. However, women lacked legal rights and were excluded from most public spheres.

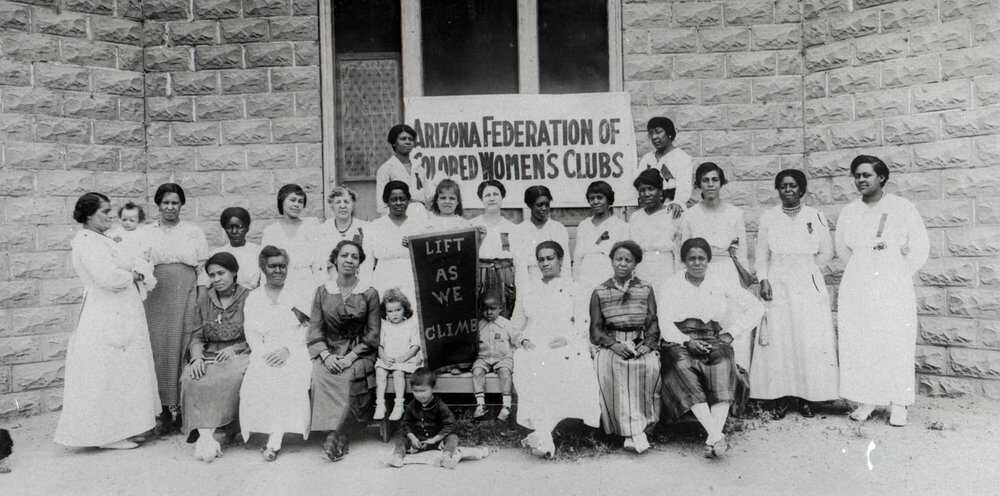

The 19th century saw the emergence of the women’s suffrage movement, which advocated for political equality and the right to vote. The Seneca Falls Convention in 1848 marked a pivotal moment, launching decades of organizing that culminated in the ratification of the 19th Amendment in 1920, which prohibited denying the right to vote on the basis of sex.

The Twentieth Century.

The 20th century witnessed significant strides in women’s liberation. The First and Second World Wars propelled women into the workforce, challenging traditional gender roles. The iconic image of “Rosie the Riveter” symbolized women’s contributions to wartime industries. Post-war, the feminist movement gained momentum, demanding equal pay, reproductive rights, and an end to gender-based discrimination.

The 1960s and 70s heralded the “second wave” of feminism, which advocated for broader social and legal reforms. Title IX, enacted in 1972, prohibited sex-based discrimination in federally funded education programs, including athletics, fostering greater opportunities for women in academia and sports.

Contemporary Trends.

In the 21st century, women have made remarkable strides in education and career advancement. More women pursue higher education and enter traditionally male-dominated professions such as law, medicine, and technology. The wage gap, though persistent, has narrowed, with increased awareness and legislative efforts promoting pay equity.

Moreover, the #MeToo movement, which gained traction in 2017, highlighted pervasive issues of sexual harassment and assault, sparking conversations about workplace culture and gender dynamics. Women’s representation in politics has also improved, with record numbers of women serving in Congress and other elected offices.

Challenges and Achievements.

Despite progress, challenges persist. Women continue to face barriers to leadership positions, particularly in corporate boardrooms and executive roles. The burden of unpaid domestic labor remains unevenly distributed, impacting women’s career trajectories. Additionally, women of color and marginalized communities experience intersecting forms of discrimination, necessitating inclusive approaches to gender equity.

Nevertheless, women’s achievements are undeniable. Women’s rights advocates continue to push for legislative reforms, including paid parental leave, affordable childcare, and strengthened protections against gender-based violence.

Impacts on Society.

The evolving role of women has had profound implications for American society. Changes in family structures, with more women serving as primary breadwinners and more households relying on two incomes, have reshaped dynamics within homes. Greater gender diversity in workplaces has been linked to enhanced innovation and productivity.

Culturally, media representation increasingly reflects diverse female experiences, challenging stereotypes and promoting inclusive narratives. The normalization of women’s leadership contributes to broader societal shifts toward gender equality and social justice.

Conclusion.

The changing role of women in American society reflects a complex interplay of historical legacies, socio-economic trends, and ongoing activism. While progress has been made, achieving full gender equality remains an ongoing endeavor. The empowerment of women not only benefits individuals but also enriches society as a whole, fostering greater diversity, resilience, and progress. By continuing to address systemic barriers and promote inclusivity, America can further advance toward a future where women’s rights are fully realized and celebrated.